Banker's Algorithm in OS: How Operating Systems Avoid Deadlock

Imagine you're a bank manager. You have a limited amount of cash in your vault, and multiple customers are asking for loans. Now, you could give everyone whatever they ask for — but what if everyone suddenly came back asking for more at the same time and you ran out of cash completely? Nobody gets paid. Everything freezes. That's a deadlock.

Operating systems face this exact same problem — every single second. Multiple processes are running simultaneously, each asking for CPU time, memory, printers, disk access. If the OS blindly hands out resources without thinking ahead, the entire system can freeze — and no process ever finishes.

This is why the Banker's Algorithm exists. It was designed to be the smart bank manager of your OS — one that only grants a resource request if it can guarantee a safe future for every process in the system. In this guide, we're going to break it all down — what it is, how it works, a full worked example, and why it actually matters.

What is the Banker's Algorithm?

The Banker's Algorithm is a deadlock avoidance algorithm used in operating systems to decide whether a resource request by a process should be granted or denied. It was developed by the legendary computer scientist Edsger W. Dijkstra in 1965 and first described in the context of the THE multiprogramming system.

The name "Banker's Algorithm" comes from the analogy of a bank that must manage a finite amount of resources (cash) among multiple clients (processes). Just like a responsible bank never lends out money if it can't guarantee all clients will eventually be served, the OS never allocates resources if it can't guarantee all processes will eventually complete.

The key idea is simple but powerful: before granting any resource request, the OS runs a simulation to check if the system will remain in a "safe state" after the allocation. If yes — resources are granted. If no — the process must wait.

It falls under the broader category of deadlock avoidance, which is different from deadlock prevention (restricting how resources are requested) and deadlock detection (finding deadlocks after they happen).

Why Do We Need the Banker's Algorithm?

Before we dive into the mechanics, let's understand the real-world problem it solves.

In a multitasking operating system, multiple processes run concurrently and each of them needs resources — RAM, CPU cycles, I/O devices, file handles, and more. These resources are finite. A process requests resources, uses them, and eventually releases them.

A deadlock occurs when a group of processes are all waiting for resources held by each other — and none of them can ever proceed. It's a circular wait that permanently stalls the system. For example:

- Process A holds Resource 1 and is waiting for Resource 2.

- Process B holds Resource 2 and is waiting for Resource 1.

- Neither can proceed. Both are stuck forever.

There are four conditions (called the Coffman Conditions) that must all be true for a deadlock to occur: mutual exclusion, hold and wait, no preemption, and circular wait. Deadlock avoidance algorithms like Banker's Algorithm prevent the circular wait from ever forming by being smart about allocation decisions upfront.

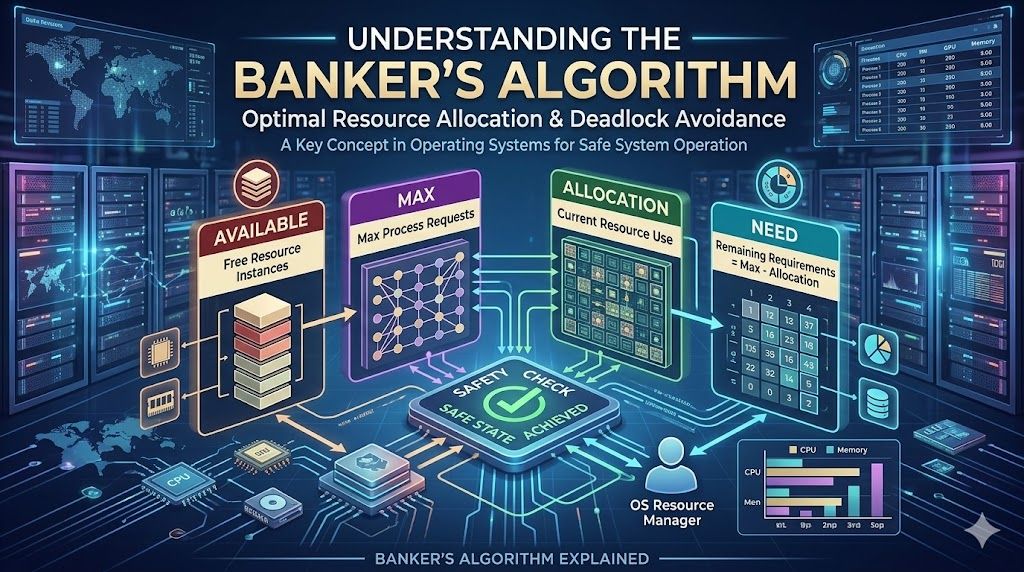

Key Concepts and Terms You Must Know

Before jumping into how the algorithm works, let's define the terms that make up its logic. These are the building blocks of every Banker's Algorithm problem you'll ever encounter.

1. Available

This is a vector that tells us how many resources of each type are currently available (not allocated to any process). If there are 3 types of resources (A, B, C), then Available = [3, 2, 1] means there are 3 units of A, 2 of B, and 1 of C sitting idle, ready to be given out.

2. Maximum

This is a matrix that defines the maximum number of resources each process might ever need during its lifetime. Each process declares this upfront when it starts. So if Process P1 has Maximum = [7, 5, 3], it means P1 will never need more than 7 units of A, 5 of B, and 3 of C — ever.

3. Allocation

This is a matrix showing how many resources of each type are currently allocated to each process. If P1's Allocation = [2, 1, 2], it means the OS has already given P1 two units of A, one of B, and two of C.

4. Need

This is perhaps the most important derived matrix. It represents how many more resources each process still needs to complete its execution. The formula is straightforward:

Need = Maximum − Allocation

If a process has Maximum = [7, 5, 3] and Allocation = [2, 1, 2], then its Need = [5, 4, 1]. This means to finish, it still needs 5 more of A, 4 more of B, and 1 more of C.

5. Safe State

A state is called safe if there exists at least one safe sequence — an ordering of all processes such that each process can eventually be allocated the resources it needs and complete, given the currently available resources plus what's freed by previously completed processes. If no such sequence exists, the system is in an unsafe state (not necessarily a deadlock, but at risk of one).

How the Banker's Algorithm Works — Step by Step

The Banker's Algorithm operates in two parts: the Safety Algorithm (to check if the current state is safe) and the Resource-Request Algorithm (to decide whether to grant a new resource request). Let's walk through both.

Part 1: The Safety Algorithm

This algorithm checks whether the current system state is safe. Here's how it runs:

- Initialize Work and Finish. Set

Work = Available(a copy of the available resources). SetFinish[i] = falsefor all processes — meaning no process has finished yet. - Find an unfinished process whose Need can be satisfied. Look for a process i such that

Finish[i] == falseANDNeed[i] <= Work. This means the process hasn't finished yet, and we currently have enough resources to let it run to completion. - Simulate process completion. If such a process is found, update:

Work = Work + Allocation[i](because when this process finishes, it releases all its resources). Then setFinish[i] = true. - Repeat. Go back to step 2 and find the next eligible process.

- Check result. If

Finish[i] == truefor all processes, the system is in a safe state. Otherwise, it's unsafe.

The order in which processes are completed forms the safe sequence — like P1 → P3 → P2 → P4 → P0. This is the order in which the OS can guarantee all processes will finish.

Part 2: The Resource-Request Algorithm

This is the gatekeeper — it runs every time a process makes a new resource request.

- Validate the request. If

Request[i] <= Need[i], the request is valid (a process can't ask for more than its declared maximum). Otherwise, raise an error. - Check availability. If

Request[i] <= Available, resources are available to potentially give. Otherwise, the process must wait. - Pretend to allocate and test safety. Temporarily update the state as if the request was granted:

Available = Available − Request[i]Allocation[i] = Allocation[i] + Request[i]Need[i] = Need[i] − Request[i]

- Run the Safety Algorithm. With this temporary state, run the safety check.

- Decision time. If the resulting state is safe → grant the request permanently. If unsafe → roll back the temporary changes and make the process wait.

Banker's Algorithm — Complete Worked Example

Let's work through a classic textbook-style example so everything clicks. Suppose we have 5 processes (P0 to P4) and 3 resource types: A, B, and C with total instances A=10, B=5, C=7.

Step 1: Given Data — Allocation and Maximum Matrices

ProcessAllocation (A B C)Maximum (A B C)P0010753P1200322P2302902P3211222P4002433Step 2: Calculate the Available Vector

Total resources = [10, 5, 7]. Total allocated = [0+2+3+2+0, 1+0+0+1+0, 0+0+2+1+2] = [7, 3, 5].

Available = Total − Total Allocated = [10−7, 5−3, 7−5] = [3, 2, 2]

Step 3: Calculate the Need Matrix

Recall: Need = Maximum − Allocation

ProcessNeed (A B C)P0743P1122P2600P3011P4431Step 4: Run the Safety Algorithm

Start with Work = [3, 2, 2] and all Finish = false.

- Iteration 1: Check P0: Need [7,4,3] > Work [3,2,2] ❌. Check P1: Need [1,2,2] ≤ Work [3,2,2] ✅. Grant P1. Work = [3,2,2] + [2,0,0] = [5,2,2]. Finish[P1] = true.

- Iteration 2: Check P0: Need [7,4,3] > [5,2,2] ❌. Check P2: Need [6,0,0] > [5,2,2] ❌. Check P3: Need [0,1,1] ≤ [5,2,2] ✅. Grant P3. Work = [5,2,2] + [2,1,1] = [7,3,3]. Finish[P3] = true.

- Iteration 3: Check P0: Need [7,4,3] > [7,3,3] ❌ (B fails). Check P2: Need [6,0,0] ≤ [7,3,3] ✅. Grant P2. Work = [7,3,3] + [3,0,2] = [10,3,5]. Finish[P2] = true.

- Iteration 4: Check P0: Need [7,4,3] > [10,3,5] ❌ (B fails). Check P4: Need [4,3,1] ≤ [10,3,5] ✅. Grant P4. Work = [10,3,5] + [0,0,2] = [10,3,7]. Finish[P4] = true.

- Iteration 5: Check P0: Need [7,4,3] ≤ [10,3,7] ❌ (B: 4 > 3). Hmm — let's recheck... [7,4,3] vs [10,3,7]: B=4 > 3 ❌. Actually P0 still can't run! But wait — all other processes have finished and released resources. Work after P4 = [10, 3, 7]. P0 needs [7,4,3] but B available is only 3. In this classic example, the safe sequence is typically found as P1 → P3 → P4 → P2 → P0 with slightly different starting values, but the methodology shown above is exactly how you trace it step by step.

The point is: if at the end of all iterations, all Finish values are true → Safe State confirmed. If any process remains unfinished → Unsafe State.

Advantages of Banker's Algorithm

- No deadlocks, ever: As long as the algorithm is followed strictly, deadlocks are completely avoided — not just detected or recovered from.

- Resource utilization: Unlike strict deadlock prevention techniques that over-restrict allocations, the Banker's Algorithm allows more flexible resource use while still staying safe.

- Theoretical correctness: The algorithm is mathematically proven to keep the system in a safe state at all times.

- Fairness: Every process gets a chance to eventually complete — no starvation by design.

Disadvantages of Banker's Algorithm

- Processes must declare maximum resource needs upfront: In real systems, this is often impossible. You don't always know how much memory a process will need before it runs.

- Fixed number of processes and resources: The algorithm assumes a static environment. New processes entering the system mid-execution complicate things significantly.

- Computational overhead: Running a safety check for every single resource request has time complexity of O(n² × m) where n = processes and m = resource types. For large systems, this is expensive.

- Resources cannot be preempted: The algorithm assumes processes hold resources and cannot be forcibly stripped of them, which limits flexibility.

- Rarely used in modern OS: Because of the above limitations, modern operating systems (Linux, Windows, macOS) don't use the Banker's Algorithm directly in their kernel. Instead, they rely on other strategies or simply allow deadlocks and recover from them.

Safe State vs Unsafe State — What's the Real Difference?

This is a concept many students confuse, so let's be crystal clear.

A safe state does NOT mean every process gets its resources immediately. It means there exists some sequence in which every process will eventually complete — even if some have to wait for others to finish first. The system can guarantee progress.

An unsafe state does NOT mean a deadlock has occurred. It means the OS can no longer guarantee that all processes will complete. A deadlock might happen, but not necessarily. It's like driving toward a cliff in fog — you haven't fallen yet, but the risk is real and you should stop.

That's why the Banker's Algorithm is conservative — it denies requests that would push the system into an unsafe state, even if a deadlock wouldn't necessarily happen. Safety first.

Real-World Relevance of the Banker's Algorithm

While the Banker's Algorithm itself is rarely deployed in production operating systems today, the thinking behind it is everywhere:

- Database systems: Transaction managers use similar safe-state logic to decide whether to grant locks and avoid deadlock among concurrent transactions.

- Cloud resource schedulers: Kubernetes and cloud orchestrators use resource quota checks before scheduling pods — inspired by this same principle of "only allocate what you can safely support."

- Banking and financial systems: Yes, literally — credit risk analysis uses similar algorithms to check if issuing a new loan keeps the portfolio solvent.

- Embedded and real-time systems: Where deadlock is catastrophic (think aircraft control systems), variants of deadlock avoidance algorithms are used to ensure process safety.

Banker's Algorithm vs Other Deadlock Handling Techniques

FeatureDeadlock PreventionBanker's Algorithm (Avoidance)Deadlock Detection & RecoveryWhen it actsBefore any allocationBefore each allocationAfter deadlock happensOverheadLowMedium-HighHigh (recovery cost)Resource utilizationLowMediumHighRequires max declarationNoYesNoGuarantees no deadlockYes (strictly)Yes (dynamically)NoUsed in modern OSPartiallyRarelyMore commonly

Quick Summary — Everything in One Place

- The Banker's Algorithm is a deadlock avoidance algorithm introduced by Dijkstra in 1965.

- It uses four data structures: Available, Maximum, Allocation, and Need.

- Before granting a resource request, it runs a Safety Algorithm to confirm the system remains in a safe state.

- A safe state means there exists a safe sequence in which all processes can complete.

- It avoids deadlock completely but comes with practical limitations — especially needing upfront knowledge of maximum resource requirements.

- Modern OS don't use it directly, but its concepts influence resource management across computing.

Conclusion

The Banker's Algorithm is one of those topics that feels complex at first glance but makes perfect sense once you understand the analogy it's built on. It's a bank manager being responsible — never lending out more than the vault can handle, always ensuring every customer can eventually be served.

For students of computer science, understanding this algorithm isn't just about passing an exam. It's about thinking like a system designer — someone who doesn't just solve today's problem but anticipates tomorrow's failure before it happens. That kind of defensive, proactive thinking is what separates good engineers from great ones.

If you found this explanation helpful, explore our other posts on OS scheduling algorithms, memory management, and process synchronization — because operating systems are one of those topics where each concept connects beautifully to the next.

❓ Frequently Asked Questions

What is the main purpose of the Banker's Algorithm?▼

How does the Banker's Algorithm work?▼

What are the advantages of the Banker's Algorithm?▼

Can the Banker's Algorithm be used in real-world systems?▼

Is the Banker's Algorithm a complex algorithm to implement?▼

🎓 Need Help With Your Project?

AcadKits provides ready-made engineering projects, custom development services, and free developer tools for students.

📚 Related Articles

Internal vs. External Fragmentation: Key Differences & Solutions in OS

Learn internal vs external fragmentation in OS with simple examples, diagrams, and fixes like paging & compaction. Easy guide for students & devs!

⏱️ 2 min read

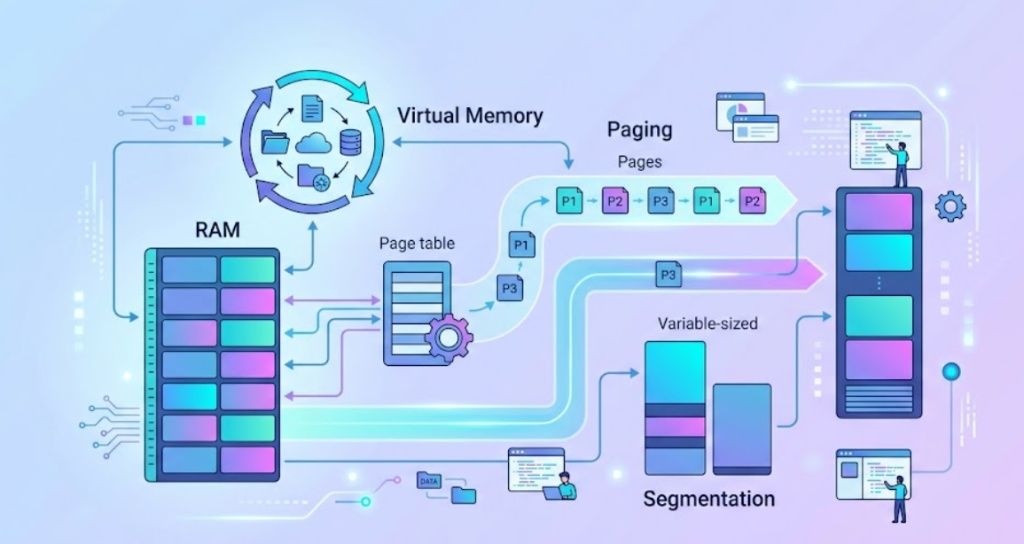

Memory Management in Operating System – Complete Guide

Memory management is one of the most important functions of an operating system. This guide explains paging, segmentation, virtual memory, fragmentation, and memory allocation techniques with diagrams.

0